Artificial intelligence in 2026 is no longer about experimentation or hype cycles. It’s about execution. Platforms that succeed with AI now do so because they are structurally prepared — technically, organizationally, and ethically. Those that struggle usually don’t lack ambition; they lack readiness.

The 2026 AI Readiness Scorecard is a rapid self-audit designed to help organizations evaluate whether their platform can support modern AI systems at scale. In just 15 minutes, it exposes strengths, risks, and blind spots that often remain hidden until failure occurs.

This article explains how the scorecard works, what it measures, and why it matters in the current AI era.

Why AI Readiness Is a 2026 Priority

AI systems today are deeply embedded in decision-making, customer experience, automation, and product intelligence. As a result, AI failures are no longer isolated technical issues — they can trigger compliance violations, reputational damage, and operational breakdowns.

Key pressures driving the need for readiness include:

- AI regulation becoming enforceable, not theoretical
- Complex model stacks involving third-party APIs, foundation models, and internal systems
- Increased expectations for transparency and accountability
- Rising costs of poor AI performance, including model drift and data leakage

Readiness is the difference between controlled innovation and reactive damage control.

What the 2026 AI Readiness Scorecard Evaluates

The scorecard focuses on platform-level capability, not individual experiments. It measures six core dimensions that determine whether AI can be deployed safely and effectively.

1. AI Intent and Organizational Direction

This dimension examines whether AI has a defined purpose inside the organization.

Indicators include:

- Clear use cases tied to measurable outcomes
- Executive ownership of AI strategy
- Alignment between AI initiatives and business priorities

Platforms score poorly here when AI exists as disconnected pilots rather than a strategic capability.

2. Data Architecture and Reliability

AI readiness depends on data that is accessible, trustworthy, and governed.

This section assesses:

- Data consistency across systems
- Provenance and traceability of training data
- Controls for access, retention, and usage

Without reliable data infrastructure, AI outputs become unpredictable and difficult to defend.

3. Model Control and Operational Maturity

In 2026, deploying a model is the easy part. Managing it is the challenge.

This category reviews:

- Versioning and documentation of models
- Monitoring for performance decay and drift
- Processes for updating, rolling back, or retiring models

Strong scores indicate disciplined AI operations rather than ad hoc deployment.
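
As one illustration of drift monitoring, the sketch below computes the Population Stability Index (PSI) between a baseline sample of a feature or model score and a recent sample. The scorecard itself does not prescribe a metric. PSI, the function names, the ten equal-width bins, and the 0.25 alert threshold (a commonly cited rule of thumb) are all assumptions chosen for this example.

```python
import math
from typing import List, Sequence

# Assumed alert level: a common rule of thumb, not part of the scorecard.
PSI_ALERT_THRESHOLD = 0.25


def bin_fractions(values: Sequence[float], edges: List[float]) -> List[float]:
    """Return the fraction of values that fall in each bin defined by edges.

    Values outside the edge range are counted in the first or last bin.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        # Move right while the value reaches the next bin's lower edge.
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    return [c / len(values) for c in counts]


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI = sum((actual - expected) * ln(actual / expected)) over all bins."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if lo == hi:
        raise ValueError("baseline must contain more than one distinct value")
    width = (hi - lo) / bins
    edges = [lo + i * width for i in range(bins + 1)]
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(current, edges)
    psi = 0.0
    for e, a in zip(expected, actual):
        # Clamp empty bins so the logarithm stays defined.
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def drift_detected(
    baseline: Sequence[float],
    current: Sequence[float],
    threshold: float = PSI_ALERT_THRESHOLD,
) -> bool:
    """Flag drift when PSI exceeds the (assumed) alert threshold."""
    return population_stability_index(baseline, current) > threshold
```

A check like this could run on a schedule against production inputs, with a positive result triggering the review, rollback, or retirement process described above. The right threshold depends on the model and the cost of a false alarm.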

4. Responsible AI and Risk Mitigation

Ethical AI is not just a moral concern — it is a legal and commercial one.

The scorecard evaluates:

- Bias detection and mitigation practices
- Explainability for high-impact decisions
- Internal review processes for AI-related risks

Organizations that ignore this area often face delayed launches or forced shutdowns later.
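
One simple bias check is the demographic parity difference: the gap between the highest and lowest rate of favorable decisions across groups. The sketch below is illustrative only. The metric choice, the function names, and the sample data are assumptions, and the scorecard does not mandate any particular fairness measure.

```python
from collections import defaultdict
from typing import Dict, Hashable, Sequence


def selection_rates(
    groups: Sequence[Hashable], decisions: Sequence[int]
) -> Dict[Hashable, float]:
    """Return the share of favorable decisions (truthy values) per group."""
    if len(groups) != len(decisions):
        raise ValueError("groups and decisions must have the same length")
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        if decision:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(
    groups: Sequence[Hashable], decisions: Sequence[int]
) -> float:
    """Gap between the highest and lowest group selection rates."""
    rates = selection_rates(groups, decisions)
    if not rates:
        raise ValueError("no decisions provided")
    return max(rates.values()) - min(rates.values())


# Hypothetical sample: group "a" is approved 3 of 4 times, group "b" 1 of 4.
sample_groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
sample_decisions = [1, 1, 1, 0, 1, 0, 0, 0]
gap = demographic_parity_difference(sample_groups, sample_decisions)
print(f"Demographic parity difference: {gap:.2f}")
```

A gap near zero means groups receive favorable decisions at similar rates. What counts as an acceptable gap is a policy decision for the internal review process, not something this code can decide.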

5. Security, Privacy, and Exposure Management

AI introduces new attack vectors that traditional security models don’t fully cover.

This pillar looks at:

- Protection of model inputs and outputs
- Safeguards against prompt injection and data extraction
- Compliance with data protection and AI-specific regulations

AI-ready platforms treat models as sensitive assets, not black boxes.

6. Workforce Readiness and Adoption

Even the best AI platform fails if people don’t know how to use it.

This section measures:

- AI literacy across technical and non-technical teams
- Internal guidelines for responsible AI usage
- Support systems for adoption and experimentation

Improving workforce readiness is often the fastest way to raise overall AI maturity.

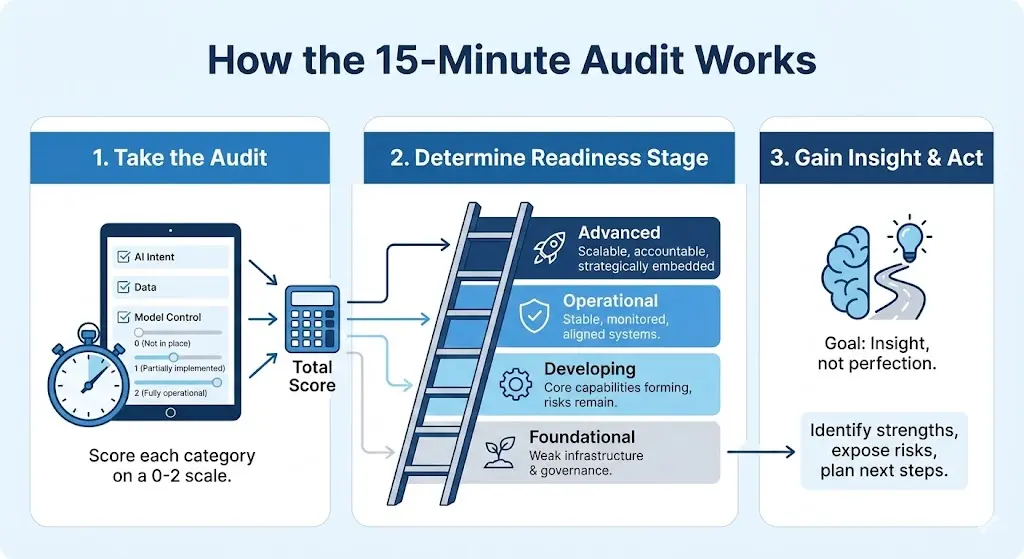

How the 15-Minute Audit Works

Each category contains a small set of targeted questions scored on a simple scale:

- 0 – Not in place
- 1 – Partially implemented
- 2 – Fully operational

The scores are then totaled, and the total places your platform into one of four readiness stages:

- Foundational – AI ambition exists, but infrastructure and governance are weak
- Developing – Core capabilities are forming, but risks remain
- Operational – AI systems are stable, monitored, and aligned
- Advanced – AI is scalable, accountable, and strategically embedded

The goal is insight, not perfection.
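
The tally can be sketched in a few lines of code. The article does not state how many questions each category contains or where the cut-offs between stages fall, so the three questions per category, the 25%, 50%, and 75% thresholds, and all names below are illustrative assumptions only.

```python
from typing import Dict, List

MAX_PER_QUESTION = 2  # 0 = not in place, 1 = partially implemented, 2 = fully operational

# Hypothetical cut-offs: (minimum fraction of the maximum score, stage name).
STAGE_THRESHOLDS = [
    (0.75, "Advanced"),
    (0.50, "Operational"),
    (0.25, "Developing"),
    (0.00, "Foundational"),
]


def total_score(answers: Dict[str, List[int]]) -> int:
    """Sum every answer after checking that each one is 0, 1, or 2."""
    total = 0
    for category, scores in answers.items():
        for score in scores:
            if score not in (0, 1, 2):
                raise ValueError(f"{category}: score {score!r} is not 0, 1, or 2")
            total += score
    return total


def readiness_stage(answers: Dict[str, List[int]]) -> str:
    """Map the total to a stage using the assumed percentage thresholds."""
    question_count = sum(len(scores) for scores in answers.values())
    if question_count == 0:
        raise ValueError("no answers provided")
    fraction = total_score(answers) / (question_count * MAX_PER_QUESTION)
    for minimum, stage in STAGE_THRESHOLDS:
        if fraction >= minimum:
            return stage
    return "Foundational"


# Hypothetical audit: six categories, three questions each.
example_answers = {
    "AI Intent and Organizational Direction": [2, 1, 1],
    "Data Architecture and Reliability": [1, 1, 0],
    "Model Control and Operational Maturity": [1, 0, 0],
    "Responsible AI and Risk Mitigation": [1, 1, 0],
    "Security, Privacy, and Exposure Management": [2, 1, 1],
    "Workforce Readiness and Adoption": [1, 1, 1],
}
print(total_score(example_answers), readiness_stage(example_answers))
```

In this made-up audit the platform scores 16 of a possible 36 points, which lands in the Developing stage under the assumed thresholds.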

Turning Scores into Action

The scorecard is most valuable when used as a planning tool.

- Low scores highlight where to invest next
- Mid-range scores reveal hidden operational risks
- High scores help identify competitive advantages worth scaling

Organizations that revisit the scorecard quarterly tend to progress faster and avoid costly AI missteps.
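
To turn results into a plan, the per-category scores can be ranked from weakest to strongest. This is a minimal sketch, assuming answers are stored as a mapping from category name to a list of 0 to 2 scores. The function name and the sample data are hypothetical.

```python
from typing import Dict, List, Tuple


def rank_categories(answers: Dict[str, List[int]]) -> List[Tuple[str, float]]:
    """Return (category, fraction of maximum score) pairs, weakest first."""
    fractions = {}
    for category, scores in answers.items():
        if not scores:
            raise ValueError(f"{category}: no answers recorded")
        # Each question is worth at most 2 points.
        fractions[category] = sum(scores) / (2 * len(scores))
    # sorted() is stable, so tied categories keep their original order.
    return sorted(fractions.items(), key=lambda item: item[1])


# Hypothetical results for three of the six categories.
quarterly_answers = {
    "Data Architecture and Reliability": [2, 2, 1],
    "Model Control and Operational Maturity": [1, 0, 0],
    "Workforce Readiness and Adoption": [1, 1, 1],
}
for category, fraction in rank_categories(quarterly_answers):
    print(f"{fraction:.0%}  {category}")
```

The categories at the top of the output are candidates for the next round of investment. Storing each quarter's ranking makes it easy to compare audits over time.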

Final Thoughts

AI readiness in 2026 is not about how advanced your models are — it’s about how prepared your platform is to support them responsibly over time. The 2026 AI Readiness Scorecard offers a fast, structured way to assess that preparedness without complex audits or external consultants.

Fifteen minutes of honest evaluation can prevent years of technical debt, compliance risk, and lost trust.

In the age of intelligent systems, readiness is strategy.