The way people shop online is changing—again. First, we optimized for desktop. Then mobile reshaped everything. Chatbots followed, offering conversational discovery through text. Now, a new frontier is quickly becoming mainstream: audio-first shopping.

Voice assistants, AI-powered shopping agents, and conversational commerce platforms are transforming how consumers search, compare, and buy products. Instead of scrolling through pages of results, shoppers are asking questions out loud and expecting immediate, accurate answers. This shift doesn’t just change user interfaces—it fundamentally changes how product information must be structured.

At the center of this transformation lies something many brands still underestimate: metadata.

If your product data was built for screens and clicks, it may not be ready for ears and conversations. This article explores what audio-first shopping really means, why metadata is critical in this evolution, and how businesses can prepare now, before voice becomes a primary shopping interface.

The Rise of the Audio-First Shopper

Audio-first shoppers don’t browse the way traditional eCommerce users do. They don’t scan grids, compare thumbnails, or skim bullet points. Instead, they ask natural-language questions like:

- “What’s the best noise-canceling headphone under $200?”
- “Is this shampoo safe for color-treated hair?”
- “Which coffee maker is easiest to clean?”

Voice-based systems must interpret these questions and respond with one or very few answers. That’s a major departure from the ten blue links or product grids we’re used to.

For brands, this means competition is no longer about being on the page—it’s about being the answer.

And that’s where metadata becomes mission-critical.

Why Metadata Matters More in Voice Than in Chatbots

Chatbots already pushed brands toward conversational data, but audio raises the stakes even higher.

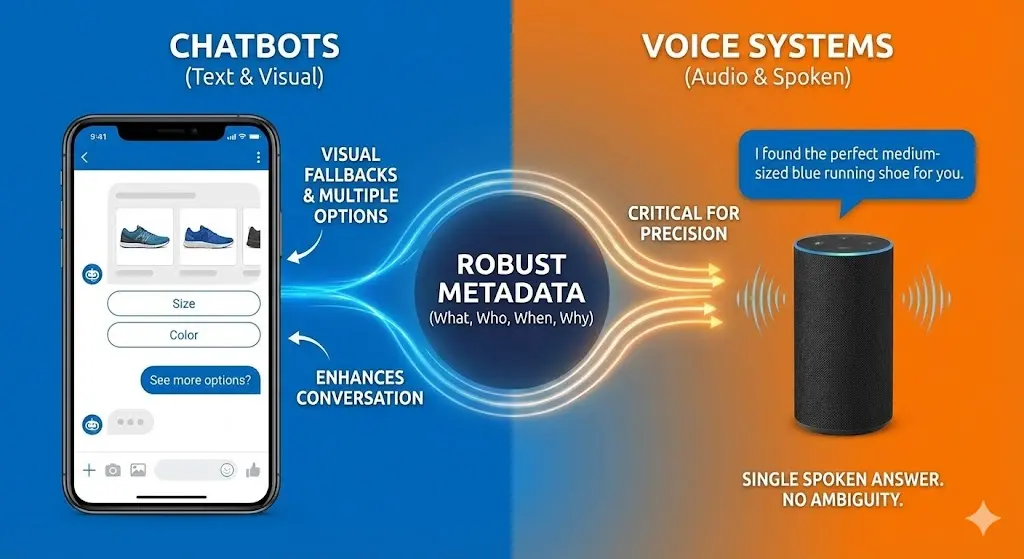

Text-based chatbots can display multiple options, show product cards, or ask follow-up questions visually. Voice systems, on the other hand, must often respond in a single spoken sentence. There is no room for ambiguity, missing attributes, or poorly structured information.

Metadata is what tells AI systems:

- What your product is
- Who it’s for
- When it should be recommended
- Why it’s relevant to a specific question

Without robust metadata, even the best product can become invisible in voice-driven commerce.

Rethinking Metadata for Conversations, Not Catalogs

Traditional product metadata was built for databases and filters. Audio-first shopping requires metadata built for conversations.

That means shifting from rigid attributes to context-aware information.

1. Intent-Based Descriptions

Voice shoppers don’t ask for SKUs or technical names. They describe needs, problems, and outcomes.

Instead of only listing:

- “Material: Stainless Steel”
- “Capacity: 12 oz”

You need metadata that supports questions like:

- “Is this safe for hot drinks?”
- “Will this fit in a car cup holder?”

Preparing for audio-first means enriching metadata with use cases, benefits, and scenarios, not just specifications.
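As a rough illustration, an enriched record might keep the specifications and the conversational context side by side. The sketch below is only one possible shape: the `ProductMetadata` class, its field names, the product, and the sample answers are all hypothetical and not taken from any particular platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ProductMetadata:
    """Hypothetical product record: specs plus conversational context."""
    name: str
    specs: Dict[str, str]  # traditional attributes
    use_cases: List[str] = field(default_factory=list)
    # Normalized shopper question -> short answer that can be read aloud.
    answers: Dict[str, str] = field(default_factory=dict)


def normalize(question: str) -> str:
    """Lowercase and drop punctuation so spoken variants match stored keys."""
    kept = (ch for ch in question.lower() if ch.isalnum() or ch.isspace())
    return "".join(kept).strip()


def answer(product: ProductMetadata, question: str) -> Optional[str]:
    """Return a stored answer for the question, or None if there is none."""
    return product.answers.get(normalize(question))


# Illustrative data only; the product and its answers are made up.
tumbler = ProductMetadata(
    name="Travel Tumbler",
    specs={"Material": "Stainless Steel", "Capacity": "12 oz"},
    use_cases=["hot drinks", "commuting"],
    answers={
        "is this safe for hot drinks": "Yes, it is safe for hot drinks.",
        "will this fit in a car cup holder": "Yes, it fits most car cup holders.",
    },
)

print(answer(tumbler, "Is this safe for hot drinks?"))
```

An exact-match lookup like this is deliberately simple. A real voice system would more likely match questions by meaning, but the point stands: the answer has to exist in the metadata before anything can retrieve it.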

2. Natural Language Synonyms and Variations

People don’t speak the way databases are labeled.

One shopper might say:

- “Running shoes”

Another might ask:

- “Jogging sneakers”

Or:

- “Shoes for daily runs”

Audio systems rely on metadata that connects all these phrases to the same product category. Without this linguistic flexibility, your products may never surface in voice queries.

Building synonym-aware metadata is no longer optional—it’s foundational.
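One simple way to picture synonym-aware metadata is a table that maps spoken phrases to a single canonical category. The sketch below assumes a hand-maintained table and plain substring matching. The `CATEGORY_SYNONYMS` table and the `running-shoes` identifier are hypothetical, and production systems would likely use richer language understanding.

```python
from typing import Optional

# Hypothetical synonym table: spoken phrase -> canonical category id.
CATEGORY_SYNONYMS = {
    "running shoes": "running-shoes",
    "jogging sneakers": "running-shoes",
    "shoes for daily runs": "running-shoes",
}


def resolve_category(utterance: str) -> Optional[str]:
    """Map a spoken request to a canonical category, or None if unknown."""
    text = utterance.lower()
    # Check longer phrases first so specific wording wins over shorter matches.
    for phrase in sorted(CATEGORY_SYNONYMS, key=len, reverse=True):
        if phrase in text:
            return CATEGORY_SYNONYMS[phrase]
    return None


for spoken in ("Running shoes", "I need jogging sneakers", "Shoes for daily runs"):
    print(spoken, "->", resolve_category(spoken))
```

All three phrasings resolve to the same category, which is the property the metadata needs to guarantee regardless of how the matching is implemented.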

3. Structured Answers, Not Just Attributes

Voice assistants often look for direct answers, not raw data.

For example:

- Attribute: “Battery life: 10 hours”
- Voice-ready answer: “This device lasts up to 10 hours on a single charge.”

Metadata should increasingly include answer-ready phrasing that AI systems can confidently read aloud without reinterpreting.

This reduces errors, improves trust, and increases the likelihood your product is selected.
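Answer-ready phrasing can be generated from existing attributes rather than written by hand for every product. The sketch below assumes a small set of sentence templates keyed by attribute name. The `ANSWER_TEMPLATES` table and the `battery_life_hours` key are hypothetical names chosen for illustration.

```python
from typing import Optional

# Hypothetical templates: attribute key -> sentence that reads well aloud.
ANSWER_TEMPLATES = {
    "battery_life_hours": "This device lasts up to {value} hours on a single charge.",
}


def voice_answer(attribute: str, value: object) -> Optional[str]:
    """Turn a raw attribute into a speakable sentence, if a template exists."""
    template = ANSWER_TEMPLATES.get(attribute)
    if template is None:
        return None  # no safe phrasing is known, so say nothing
    return template.format(value=value)


print(voice_answer("battery_life_hours", 10))
# prints: This device lasts up to 10 hours on a single charge.
```

Returning `None` for attributes without a template is a deliberate choice here: it seems safer for a voice system to stay silent than to improvise a sentence from raw data.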

Audio-First Shoppers Value Trust and Clarity

When a voice assistant recommends a product, it acts as an intermediary. The shopper is placing trust not only in the AI, but indirectly in your brand.

Metadata plays a silent but powerful role in building that trust.

Trust signals that voice systems are likely to weigh include:

- Clear compatibility information
- Safety and compliance details
- Verified reviews or ratings summaries
- Transparent pricing and availability

If this information is incomplete or inconsistent, voice systems may skip your product entirely to avoid risk.

How Search Optimization Is Becoming Answer Optimization

Audio-first shopping accelerates the move from traditional Search Engine Optimization (SEO) to Answer Engine Optimization (AEO).

In this world:

- Ranking #3 is meaningless if only one answer is spoken
- Metadata must support direct, concise, authoritative responses
- Long-winded descriptions are less valuable than precise clarity

Brands should begin asking:

- Can our metadata answer “why,” not just “what”?
- Does it help an AI confidently recommend our product?

Preparing Your Metadata Strategy Today

You don’t need to rebuild everything overnight—but you do need to start intentionally.

Step 1: Audit for Voice Readiness

Review your existing product data and ask:

- Can this be read aloud naturally?
- Does it answer common shopper questions?
- Is critical context missing?
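Parts of this audit can be automated as a first pass. The sketch below turns the three questions above into simple checks on a product record. The field names (`spoken_summary`, `answers`, `ideal_user`, `use_situations`) and the 30-word limit are assumptions made for illustration, not an established standard.

```python
from typing import Dict, List

# Assumed limit for a summary that is comfortable to listen to.
MAX_SPOKEN_WORDS = 30
# Hypothetical context fields a voice system might need.
CONTEXT_FIELDS = ("ideal_user", "use_situations")


def audit_voice_readiness(product: Dict[str, object]) -> List[str]:
    """Return a list of voice-readiness issues; an empty list means none found."""
    issues = []

    # 1. Can this be read aloud naturally?
    summary = str(product.get("spoken_summary") or "")
    if not summary:
        issues.append("missing spoken_summary")
    elif len(summary.split()) > MAX_SPOKEN_WORDS:
        issues.append("spoken_summary is too long to read aloud")

    # 2. Does it answer common shopper questions?
    if not product.get("answers"):
        issues.append("no answers to common shopper questions")

    # 3. Is critical context missing?
    for name in CONTEXT_FIELDS:
        if not product.get(name):
            issues.append(f"missing context field: {name}")

    return issues


print(audit_voice_readiness({"spoken_summary": "A 12 oz steel tumbler."}))
```

A script like this only flags gaps. Whether a summary actually sounds natural when spoken still needs a human, or at least a listen-through.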

Step 2: Enrich with Contextual Attributes

Go beyond size and color. Add metadata fields for:

- Ideal user type
- Common problems solved
- Situational use (travel, home, outdoor, professional)

Step 3: Align Metadata Across Channels

Voice assistants pull data from multiple sources—your website, marketplaces, APIs, and feeds. Inconsistent metadata creates confusion and reduces confidence.

Consistency is not just good practice; it’s a requirement for voice visibility.
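A basic consistency check compares the same product's fields across every channel and reports any that disagree. The sketch below assumes each channel's data has already been exported to a flat dictionary. The channel names and values are illustrative only.

```python
from typing import Dict


def find_inconsistencies(records: Dict[str, Dict[str, object]]) -> Dict[str, Dict[str, object]]:
    """Return fields whose values differ between channels.

    `records` maps a channel name to that channel's metadata for one product.
    A field missing from a channel counts as None and is reported as a conflict.
    """
    all_fields = set()
    for record in records.values():
        all_fields.update(record)

    conflicts = {}
    for name in sorted(all_fields):
        values = {channel: record.get(name) for channel, record in records.items()}
        # Compare via repr so unhashable values such as lists are handled too.
        if len({repr(value) for value in values.values()}) > 1:
            conflicts[name] = values
    return conflicts


# Illustrative data only.
channels = {
    "website": {"price": "24.99", "capacity": "12 oz"},
    "marketplace": {"price": "26.99", "capacity": "12 oz"},
}
print(find_inconsistencies(channels))
# prints: {'price': {'website': '24.99', 'marketplace': '26.99'}}
```

Running a check like this on a schedule is one way to catch drift between feeds before a voice assistant does.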

Looking Ahead: Metadata as a Competitive Advantage

As audio-first shopping grows, metadata will no longer be a backend concern—it will be a frontline differentiator.

Brands that invest early will benefit from:

- Higher visibility in voice commerce
- Stronger AI recommendations
- Reduced dependence on paid placements
- Deeper customer trust

Those that delay may find themselves perfectly designed for screens—but completely silent in conversations.

Final Thoughts

The journey from chatbots to voice is not just a technology shift—it’s a mindset shift. Shopping is becoming more human, more conversational, and more immediate. Metadata is the bridge between your products and these new experiences.

By preparing your metadata for the audio-first shopper today, you’re not just adapting to change—you’re positioning your brand to be heard, trusted, and chosen in the future of commerce.

The question is no longer if shoppers will talk to technology.

It’s whether your products will know how to answer back.