As e-commerce evolves, businesses increasingly rely on autonomous AI agents to manage complex tasks—from processing orders and optimizing pricing to handling customer service. These AI-driven systems promise efficiency, cost savings, and personalized experiences, but they also raise a pressing question: Who is legally responsible when an AI agent fails?

Understanding liability in autonomous e-commerce isn't just a theoretical concern. As AI takes on more independent decision-making, failures can lead to financial losses, reputational harm, or even regulatory penalties. This post explores the legal and ethical dimensions of AI liability in online commerce, helping businesses, developers, and consumers navigate this emerging terrain.

What Constitutes an AI Failure in E-commerce?

An AI failure occurs when an autonomous agent acts in a way that produces undesired outcomes, causing harm or loss. In e-commerce, this could include:

- Order errors: AI mismanages stock, double charges customers, or cancels orders incorrectly.
- Fraud or security breaches: An AI system fails to detect fraudulent transactions or exposes sensitive data.
- Pricing and promotion mistakes: Algorithmic errors lead to overcharging, undercharging, or illegal discounting.
- Customer service failures: AI chatbots provide misleading or harmful advice.

The complexity of these systems can make it difficult to trace failures directly to human action, leading to complicated questions of legal liability.

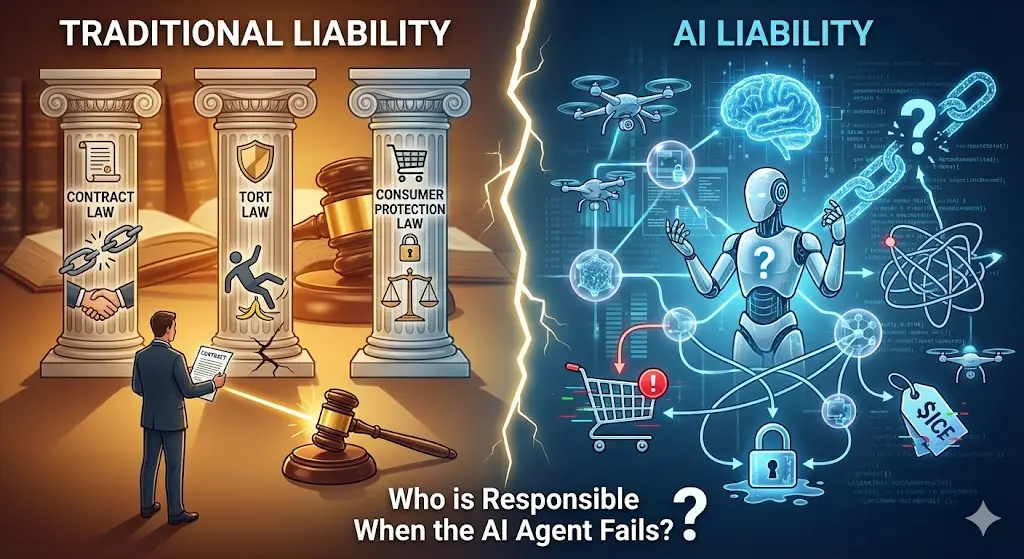

Traditional Liability vs. AI Liability

In traditional e-commerce, liability usually falls under well-established frameworks:

- Contract law: Breach of agreement with customers or suppliers.
- Tort law: Negligence or intentional harm.
- Consumer protection law: Compliance with regulations governing fair business practices.

However, autonomous AI challenges these frameworks because AI agents can act independently, make decisions in real time, and continuously learn from data. When a failure occurs, it may be difficult to assign clear responsibility under conventional law.

Who Could Be Held Responsible?

Several parties may face liability depending on the context of the AI failure:

1. The E-commerce Business

Businesses generally remain responsible for their own operations, including the parts they automate. If an AI agent causes harm to a customer, courts may hold the company accountable, even if the fault originated in the AI software. Businesses are expected to exercise reasonable oversight of their AI systems.

Example: A pricing algorithm sets an incorrect price for high-value products, causing customer losses. The company may face claims of breach of contract or deceptive pricing.

2. AI Developers or Vendors

If an AI system contains inherent defects, coding errors, or unsafe decision-making frameworks, developers or third-party vendors could be liable. Liability may hinge on whether the business relied on the system in good faith or whether the developers failed to meet industry standards.

Example: A vendor provides an AI inventory system that mismanages orders due to a known bug, leading to financial loss. The vendor may share liability with the business deploying it.

3. Shared Liability

In many cases, liability is shared, especially in contracts that include AI services. Businesses and vendors often allocate risk via service-level agreements (SLAs) and liability clauses, specifying responsibility for failures, errors, or breaches.

Ethical consideration: Even if liability is legally assigned to the vendor, businesses have an ethical duty to protect customers and mitigate harm.

4. Regulatory Responsibility

Regulators increasingly require businesses to demonstrate accountability for AI systems. For instance:

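- EU AI Act: Adopted in 2024 as Regulation (EU) 2024/1689, with obligations phasing in over several years. It imposes requirements on high-risk AI systems, including transparency, traceability, and human oversight.
- U.S. Federal Trade Commission: Applies its existing authority over unfair or deceptive practices (Section 5 of the FTC Act) to AI-driven conduct, such as misleading claims about what an AI system does.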

Failure to comply with regulatory requirements can lead to administrative fines, litigation, or reputational damage, regardless of who technically “caused” the AI failure.

Ethical Implications of AI Failures

Beyond legal liability, autonomous AI raises ethical concerns:

- Transparency: Customers often have little insight into how AI systems make decisions. Ethical AI requires explainable algorithms.
- Fairness: AI failures may disproportionately impact vulnerable populations, raising equity concerns.
- Accountability: Organizations must decide who is ethically responsible when AI causes harm.
- Risk management: Businesses have a duty to proactively prevent AI failures and provide remedies when they occur.

Companies that fail to address these ethical concerns may face long-term loss of trust, even if legal liability is limited.

Strategies to Manage AI Liability

Businesses can take several steps to reduce legal and ethical risks:

- Implement human oversight: Ensure humans monitor AI decisions, especially in high-risk areas like payments or security.
- Document decision-making: Maintain logs and records that demonstrate how AI systems arrive at decisions.
- Use robust contracts: Allocate liability clearly in agreements with AI vendors.
- Regular testing and audits: Conduct frequent AI performance audits to detect errors before they cause harm.
- Insurance coverage: Consider technology errors and omissions insurance to cover AI-related incidents.
- Compliance frameworks: Align AI systems with emerging regulations and ethical guidelines.
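To make the first two strategies concrete, here is a minimal Python sketch of a pricing guardrail that holds large price changes for human review and writes every decision to an audit log. Everything in it is an illustrative assumption rather than a reference to any real system: the `PriceDecision` fields, the `needs_human_review` and `apply_with_oversight` helpers, and the 20% threshold are all hypothetical and would need to be set by each business.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class PriceDecision:
    """One pricing proposal from the AI agent (hypothetical fields)."""
    sku: str
    current_price: float
    proposed_price: float
    model_version: str
    reason: str


def needs_human_review(decision: PriceDecision, max_change: float = 0.20) -> bool:
    """Flag proposals that move the price by more than max_change (a fraction).

    The 20% default is an arbitrary illustration, not a recommended value.
    """
    if decision.current_price <= 0:
        return True  # no usable baseline, so always escalate
    change = abs(decision.proposed_price - decision.current_price) / decision.current_price
    return change > max_change


def apply_with_oversight(decision: PriceDecision, audit_log: list[str]) -> str:
    """Record the decision, then report whether it was applied or held."""
    status = "held_for_review" if needs_human_review(decision) else "auto_applied"
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "status": status,
        **asdict(decision),
    }
    audit_log.append(json.dumps(record))  # one JSON line per decision
    return status


log: list[str] = []
small = PriceDecision("SKU-1", 100.0, 105.0, "pricing-v3", "competitor match")
large = PriceDecision("SKU-2", 1200.0, 12.0, "pricing-v3", "demand forecast")
print(apply_with_oversight(small, log))  # auto_applied
print(apply_with_oversight(large, log))  # held_for_review
```

The log is written before any action is taken, so a record exists even for decisions a human later rejects. In a dispute, that kind of record is what lets a business show which model version proposed a price, why, and whether a person signed off.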

The Future of AI Liability in E-commerce

As AI continues to evolve, legal systems worldwide will face pressure to adapt. Proposals and debates already under way include:

- AI-specific liability laws: Creating frameworks that assign responsibility for autonomous agent failures.
- AI personhood debates: Philosophical discussions on whether highly autonomous AI could assume limited legal responsibility.
- Global standards: Harmonizing rules to prevent cross-border liability disputes in e-commerce.

While these developments are still emerging, businesses that adopt proactive oversight and ethical practices will be better positioned to navigate risks.

Conclusion

Autonomous AI agents in e-commerce offer remarkable opportunities but come with serious legal and ethical responsibilities. When AI fails, liability may fall on the business, the developers, or the vendors, often in some shared combination, and regulators may impose penalties regardless of who technically caused the failure. Understanding these dynamics is essential for companies to manage risk, protect customers, and comply with evolving laws.

Ultimately, the question of responsibility in AI failures goes beyond law—it’s also about trust, ethics, and accountability in a world where machines increasingly make decisions that impact people’s lives and finances. Businesses that take these considerations seriously will not only reduce liability but also strengthen customer confidence in the age of autonomous commerce.