The EU AI Act is no longer a distant regulatory concept—it’s a near-term reality that will reshape how e-commerce companies design, deploy, and scale AI. Whether your organization uses AI for product recommendations, dynamic pricing, fraud detection, chatbots, or supply chain optimization, the Act affects you. For executives, the challenge is both strategic and operational: how do you stay compliant without slowing down the innovation that keeps you competitive?

This guide breaks down what the EU AI Act really means for e-commerce leaders and offers practical insights on navigating the line between compliance and innovation.

Why E-Commerce Leaders Must Pay Attention

E-commerce is one of the most AI-intensive industries. Nearly every stage of the digital retail journey involves some form of automation—often without customers realizing it. The EU AI Act is designed to make this more transparent and accountable.

For executives, ignoring the Act is not an option. Non-compliance can result in fines of up to €35 million or 7% of worldwide annual turnover for the most serious violations, a penalty structure similar to the GDPR’s. But beyond penalties, the Act influences customer trust, cross-border operations, and future technology roadmaps. In a sector where margins are tight and competition is fierce, being caught off guard could be costly.

Understanding the EU AI Act in Simple Terms

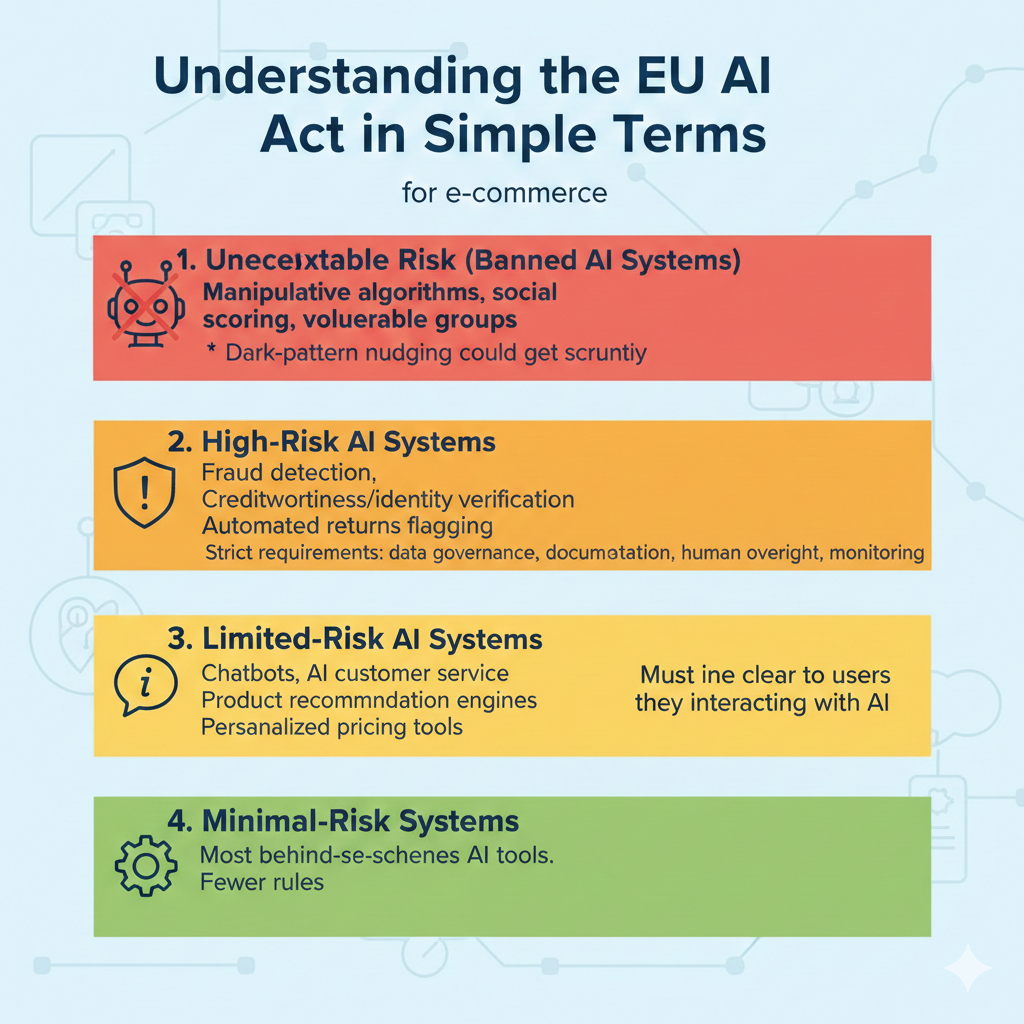

The Act classifies AI systems into four tiers of risk. Not every AI used in e-commerce falls into a high-risk category, but many do. Here’s the simplified breakdown:

1. Unacceptable Risk (Banned AI Systems)

These include manipulative algorithms, social scoring tools, or systems designed to exploit vulnerable groups.

Most e-commerce applications don’t fall here, but AI-driven nudging that relies on dark patterns could draw scrutiny.

2. High-Risk AI Systems

These are systems that significantly affect customer rights or financial outcomes. For e-commerce, this often includes:

- Fraud detection algorithms
- Creditworthiness or identity-verification systems
- Automated decision-making for returns flagged as “suspicious”
- AI used in logistics or worker management

High-risk systems are subject to strict requirements: data governance, documentation, human oversight, and continuous monitoring.

3. Limited-Risk AI Systems

AI that interacts directly with users and requires transparency, such as:

- Chatbots
- AI customer service agents
- Product recommendation engines (depending on use case)
- Personalized pricing tools

These systems must make it clear to users that they are interacting with AI.

4. Minimal-Risk Systems

Most behind-the-scenes AI tools fall here and face fewer rules.
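One practical way to work with the four tiers is to keep an inventory of your AI systems and tag each one with its tier. The sketch below is a minimal illustration, not a legal classification: the names `RiskTier`, `AISystem`, and `obligations_for`, the example systems, and their tier assignments are all assumptions made for this example, and the obligations are summarized from the breakdown above rather than quoted from the Act.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations summarized from the tier descriptions in this guide (not legal text).
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["do not deploy"],
    RiskTier.HIGH: [
        "data governance",
        "documentation",
        "human oversight",
        "continuous monitoring",
    ],
    RiskTier.LIMITED: ["disclose AI use to users"],
    RiskTier.MINIMAL: [],
}


@dataclass(frozen=True)
class AISystem:
    name: str
    owner: str
    tier: RiskTier


def obligations_for(system: AISystem) -> list[str]:
    """Return a copy of the obligations attached to the system's tier."""
    return list(OBLIGATIONS[system.tier])


# Hypothetical inventory; real tier assignments need legal review.
inventory = [
    AISystem("support-chatbot", "customer-care", RiskTier.LIMITED),
    AISystem("credit-check", "payments", RiskTier.HIGH),
    AISystem("warehouse-demand-forecast", "operations", RiskTier.MINIMAL),
]

for system in inventory:
    print(system.name, system.tier.value, obligations_for(system))
```

Even a simple register like this gives legal and engineering teams a shared list to review when a system changes or a new one is added.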

Key Areas of Impact for E-Commerce Companies

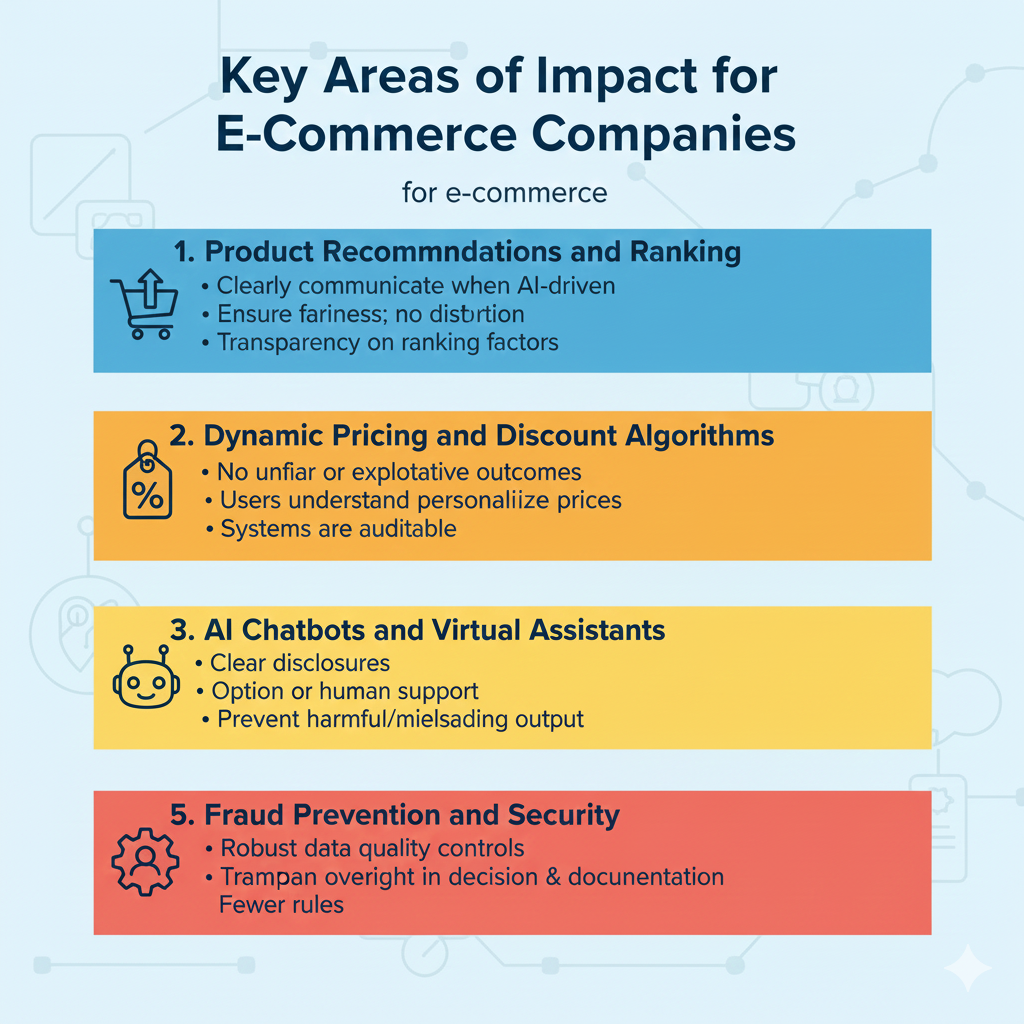

1. Product Recommendations and Ranking

AI-driven rankings influence what customers see and buy. Under the Act, platforms must:

- Clearly communicate when recommendations are AI-driven.
- Ensure ranking models do not unfairly distort consumer behavior.
- Provide transparency about the factors influencing recommendation outcomes.

This doesn’t ban personalization—but it requires clarity and accountability.

2. Dynamic Pricing and Discount Algorithms

Dynamic pricing sits in a gray zone. Used responsibly, it’s a powerful revenue tool. Used poorly, it can become discriminatory.

Executives need to ensure:

- AI pricing models do not result in unfair or exploitative outcomes.
- Users understand when prices are personalized.
- Systems are auditable, especially if EU regulators request documentation.
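Auditability usually comes down to recording what the pricing model decided and why. As a rough sketch, assuming a hypothetical `record_decision` helper and made-up field names such as `model_version` and `factors`, each price change could be written as one JSON line:

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class PricingDecision:
    product_id: str
    base_price: float
    final_price: float
    model_version: str
    factors: dict       # inputs that influenced the price (hypothetical names)
    personalized: bool  # True if the price depended on the individual user
    decided_at: str     # UTC timestamp in ISO 8601 format


def record_decision(product_id, base_price, final_price,
                    model_version, factors, personalized):
    """Serialize one pricing decision as a single JSON line."""
    decision = PricingDecision(
        product_id=product_id,
        base_price=base_price,
        final_price=final_price,
        model_version=model_version,
        factors=factors,
        personalized=personalized,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )
    # One JSON object per line is easy to append to a log and to export later.
    return json.dumps(asdict(decision), sort_keys=True)


line = record_decision(
    "sku-123", 40.0, 36.0, "pricing-v7",
    {"stock_level": "high", "season": "off-peak"},
    personalized=False,
)
print(line)
```

The `personalized` flag is the piece that supports the disclosure point: if it is ever true, the storefront knows it has something to tell the customer.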

3. AI Chatbots and Virtual Assistants

Under the Act, customers must know when AI—not a human—is interacting with them. This means:

- Clear disclosures
- Options to escalate to human support
- Strong controls to prevent harmful or misleading output
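The first two points can be sketched in a few lines. This is a simplified illustration under stated assumptions: `generate_reply` is a stand-in for whatever model your chatbot actually calls, and the disclosure wording and escalation keywords are invented for the example.

```python
AI_DISCLOSURE = "You are chatting with an automated AI assistant."
ESCALATION_KEYWORDS = ("human", "agent", "representative")


def generate_reply(message: str) -> str:
    # Placeholder for the real model call (hypothetical).
    return f"Thanks for your message: {message!r}. How else can I help?"


def wants_human(message: str) -> bool:
    """Very simple keyword check for a request to talk to a person."""
    text = message.lower()
    return any(keyword in text for keyword in ESCALATION_KEYWORDS)


def handle_message(message: str, first_turn: bool) -> dict:
    # Escalation takes priority over any automated answer.
    if wants_human(message):
        return {"handoff": True, "text": "Connecting you to a human agent."}
    reply = generate_reply(message)
    # Disclose AI involvement at the start of the conversation.
    if first_turn:
        reply = f"{AI_DISCLOSURE}\n{reply}"
    return {"handoff": False, "text": reply}


print(handle_message("Where is my order?", first_turn=True)["text"])
print(handle_message("I want to talk to a human", first_turn=False))
```

A production system would need a more robust way to detect escalation requests than keyword matching, but the control flow stays the same: disclose first, and never trap the customer with the bot.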

4. Fraud Prevention and Security

Fraud detection tools often process sensitive personal data, which can place them in the high-risk category depending on how they are used.

Compliance requirements include:

- Robust data quality controls
- Transparency in how fraud decisions are made
- Human oversight for critical decisions
- Documentation proving fairness and accuracy
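Human oversight is often implemented as a routing rule: the model may clear low-risk orders on its own, but anything that could hurt a customer goes to a person. The sketch below assumes a fraud score between 0 and 1; the function name `route_fraud_decision` and both thresholds are hypothetical and would need tuning against your own data.

```python
def route_fraud_decision(score: float,
                         approve_below: float = 0.3,
                         urgent_above: float = 0.8) -> str:
    """Decide what happens to an order given a model fraud score in [0, 1]."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score < approve_below:
        # Low risk: no adverse effect on the customer, so automation is fine.
        return "auto_approve"
    if score >= urgent_above:
        # High risk: pause the order, but let a person make the final call.
        return "hold_for_urgent_review"
    # Everything in between waits in the normal review queue.
    return "queue_for_review"


for score in (0.1, 0.5, 0.95):
    print(score, route_fraud_decision(score))
```

Note that the model never rejects an order by itself in this design; the adverse decision always belongs to a reviewer.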

5. Supply Chain and Workforce Algorithms

Some internal AI tools—like those used to optimize staff scheduling or performance—are also regulated if they impact workers’ rights.

Balancing Compliance and Innovation

The biggest fear among executives is that the EU AI Act will slow the pace of technological progress. But the Act also creates opportunities for competitive differentiation.

Here’s how to strike the right balance:

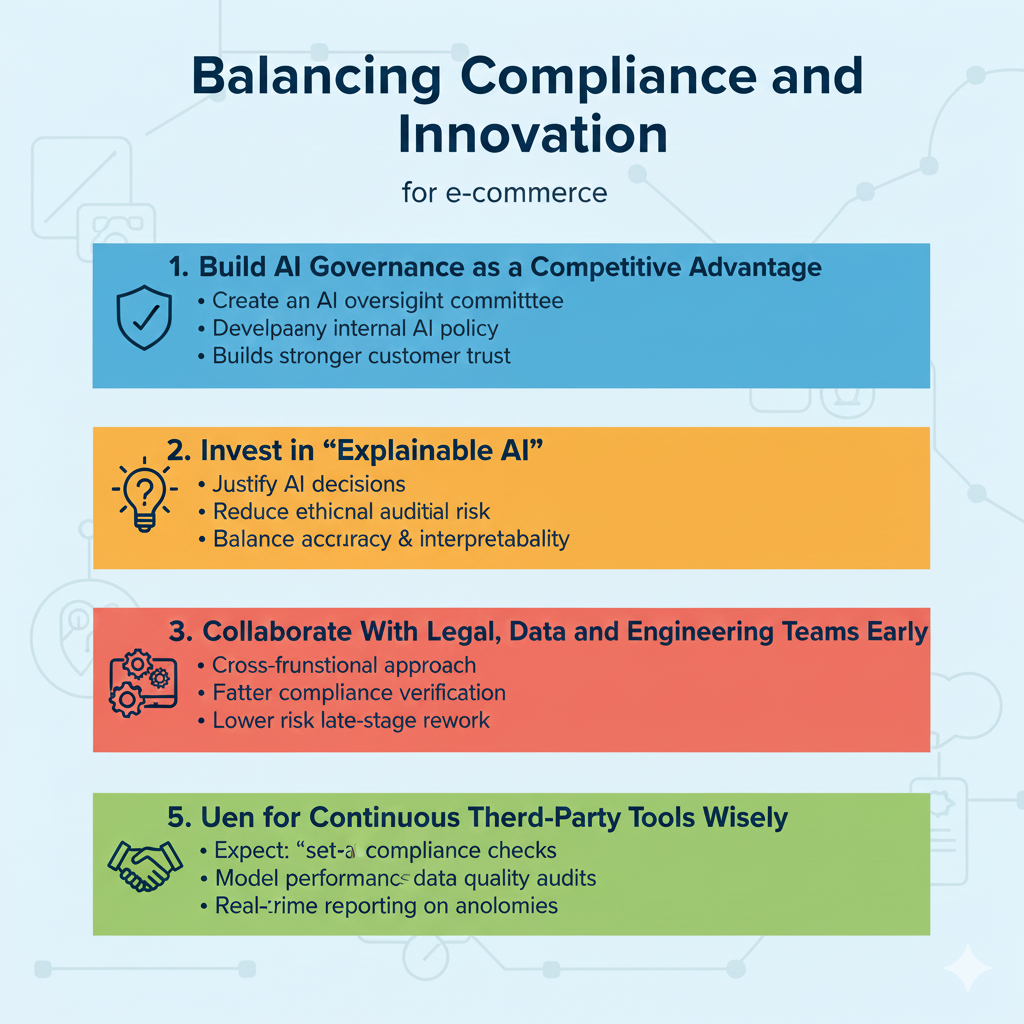

1. Build AI Governance as a Competitive Advantage

Companies that manage AI responsibly can build stronger customer trust.

Strategic steps include:

- Creating an AI oversight committee
- Implementing AI risk assessments
- Developing an internal AI policy that aligns with EU standards

Well-governed AI is not just compliant—it’s more reliable.

2. Invest in “Explainable AI”

Regulators and customers increasingly want transparency.

Explainable AI helps:

- Justify AI decisions (especially pricing or fraud classifications)
- Improve internal auditability
- Reduce ethical and reputational risk

Executives should prioritize AI models that strike a balance between accuracy and interpretability.

3. Collaborate With Legal, Data, and Engineering Teams Early

The days of developing AI models in isolation are over.

A cross-functional approach ensures:

- Faster compliance verification
- Lower risk of late-stage rework
- Better integration between business, technology, and regulatory requirements

4. Use Vendor and Third-Party Tools Wisely

If your e-commerce stack relies on AI from external vendors, you are still responsible for compliance.

Ask vendors:

- What risk category their AI falls under
- Whether they provide documentation aligned with the EU AI Act
- How their models handle bias, accuracy, and oversight

Vendor due diligence becomes essential.

5. Plan for Continuous Monitoring

AI is not a “set-and-forget” technology—especially under EU law.

Executives should expect:

- Annual or periodic compliance checks
- Model performance reviews
- Continuous data quality audits
- Real-time reporting on anomalies

This ongoing commitment helps prevent regulatory or reputational surprises.
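Anomaly reporting does not have to start with heavy tooling. As a minimal sketch, assuming you already track a metric such as the share of orders flagged by a model, a check like the hypothetical `drift_alert` below compares a recent window against a baseline. The sample numbers and the 0.05 tolerance are invented for illustration.

```python
from statistics import mean


def drift_alert(baseline: list[float], recent: list[float],
                tolerance: float = 0.05) -> bool:
    """Return True when the recent average moves more than `tolerance`
    away from the baseline average."""
    if not baseline or not recent:
        raise ValueError("both windows need at least one value")
    return abs(mean(recent) - mean(baseline)) > tolerance


# Hypothetical weekly flag rates (fraction of orders flagged by the model).
baseline_flag_rate = [0.020, 0.022, 0.019, 0.021]
this_week = [0.080, 0.090, 0.085]

if drift_alert(baseline_flag_rate, this_week):
    print("Flag rate has drifted; trigger a model review.")
```

A comparison of averages is deliberately crude. It will miss subtle shifts, but it is enough to show where an automated check fits between periodic reviews.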

Turning Compliance Into a Differentiator

Companies that master the EU AI Act early will enjoy significant advantages:

- Higher customer trust due to transparent AI practices
- Faster expansion across EU markets with fewer regulatory barriers
- Reduced risk exposure from algorithmic errors or complaints
- Enhanced brand reputation as a responsible innovator

In a digital marketplace where customers are increasingly concerned about fairness and privacy, ethical AI could become a key selling point.

Final Thoughts

The EU AI Act is not simply another compliance hurdle—it’s a catalyst for more trustworthy and customer-centric AI in e-commerce. For executives, the goal is not to choose between innovation and compliance but to integrate both into a cohesive strategy.

By building transparent, accountable, and human-centered AI systems, e-commerce companies can continue to innovate aggressively while maintaining the confidence of customers, regulators, and partners.

In the new era of AI-powered commerce, compliance isn’t a barrier—it’s a foundation for long-term innovation and growth.